Long defined by a single heuristic, the era of “just add compute” has hit a wall of diminishing returns, according to Ilya Sutskever.

From 2020 to 2025, the artificial intelligence industry operated on the assumption that power laws dictated a reliable yield.

Feeding more data and compute into a model was expected to automatically produce smarter results. That period, now defined as the “Age of Scaling,” is over, he said in a recent interview with Dwarkesh Patel.

Sutskever, co-founder and former chief scientist of OpenAI, now pursuing his own venture, Safe Superintelligence Inc. (SSI), is a key architect of the deep learning revolution and worth listening to.

In his interview with Patel, he explicitly divided the industry’s timeline into two distinct eras. He argued that the strategy of simply scaling pre-training has exhausted its low-hanging fruit.

“Up until 2020, from 2012 to 2020, it was the age of research. Now, from 2020 to 2025, it was the age of scaling… because people say, ‘This is amazing. You’ve got to scale more. Keep scaling.’ The one word: scaling. But now the scale is so big. It would be different, for sure. But is the belief that if you just 100x the scale, everything would be transformed? I don’t think that’s true. So it’s back to the age of research again, just with big computers.”

According to Sutskever, the scaling heuristic that dominated the last five years “sucked out all the air in the room,” homogenizing strategy across all major labs. In his words, “everyone started to do the same thing.”

Every major player, from OpenAI to Google, chased the same curve, assuming that 100x more compute would yield 100x more intelligence.
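
To see why that assumption is fragile, here is a minimal sketch of what a power law implies, assuming loss falls as compute raised to a small negative exponent. The exponent `alpha = 0.05` and the function name `loss_ratio` are illustrative assumptions, not figures from Sutskever or any specific lab.

```python
def loss_ratio(compute_multiplier: float, alpha: float = 0.05) -> float:
    """Return new_loss / old_loss if loss is proportional to compute ** (-alpha).

    alpha is a hypothetical exponent chosen only for illustration.
    """
    return compute_multiplier ** (-alpha)


# Each extra factor of 10 in compute buys the same relative improvement,
# so the absolute gains shrink while the bill grows tenfold.
for multiplier in (10, 100, 1_000, 10_000):
    ratio = loss_ratio(multiplier)
    print(f"{multiplier:>6}x compute -> loss falls by {100 * (1 - ratio):.1f}%")
```

Under this assumed exponent, 100x more compute trims the loss by roughly a fifth, which is a long way from a 100x improvement.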

According to Sutskever, the new “Age of Research” demands a return to architectural innovation rather than brute force.

Yann LeCun, still Chief AI Scientist at Meta but soon to depart, shares this view, arguing that the current trajectory is asymptotically approaching a ceiling rather than superintelligence.

“We are not going to get to human level AI by just scaling up LLMs. This is just not going to happen,” he said in an interview with podcaster Alex Kantrowitz.

The Finite Data Problem: Why ‘More’ Is No Longer ‘Better’

Fundamental to the shift back from scaling to research is a bottleneck that no amount of Nvidia GPUs can solve: the finite nature of high-quality pre-training data. Sutskever notes that “the data is very clearly finite,” forcing labs to choose between “souped-up pre-training” or entirely new paradigms.

And LeCun goes even further with his scathing critique of the current state of the art, describing Large Language Models (LLMs) as sophisticated retrieval engines rather than intelligent agents.

“What we’re going to have maybe is systems that are trained on sufficiently large amounts of data that any question that any reasonable person may ask will will find an answer through those systems. And it would feel like you have you know a PhD sitting next to you. But it’s not a PhD you have next to you it’s you know a system with a gigantic memory and retrieval ability, not a system that can invent solutions to to new problems.”

Such a distinction is critical for understanding the industry’s pivot. A “gigantic memory” can certainly pass exams by retrieving patterns, but it cannot invent solutions to novel problems.

Nor can it reason through complex, multi-step tasks without hallucinating.

This flaw explains the stagnation: it prevents models from generalizing outside their training distribution, a limitation that simple scaling can no longer mask.

And without a shift in methodology, the industry risks spending billions to build slightly better librarians rather than scientists.

Enter the ‘Age of Research’: Reasoning, RL, and Value Functions

Sutskever defines the “Age of Research” as a shift from next-token prediction to “System 2” reasoning. He points to value functions and Reinforcement Learning (RL) as the new frontier.

Unlike pre-training, which requires extensive static datasets, RL allows models to learn from their own reasoning traces, effectively generating their own data through trial and error.
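
As a rough illustration of what a value function is, the sketch below learns one by trial and error on a toy five-state random walk using temporal-difference (TD(0)) updates. It is a textbook-style toy under stated assumptions, not the method of any lab mentioned here; the environment, the function name `td0_random_walk`, and all parameters are hypothetical.

```python
import random


def td0_random_walk(episodes: int = 20000, alpha: float = 0.005, seed: int = 0):
    """Estimate state values on a 5-state random walk with TD(0).

    The agent starts in the middle, steps left or right at random, and
    receives reward 1 only when it exits on the right-hand side.
    """
    rng = random.Random(seed)
    n_states = 5
    values = [0.5] * n_states  # initial guess for every state
    for _ in range(episodes):
        state = n_states // 2
        while True:
            next_state = state + rng.choice((-1, 1))
            if next_state < 0:            # fell off the left edge: no reward
                reward, next_value, done = 0.0, 0.0, True
            elif next_state >= n_states:  # exited on the right: reward 1
                reward, next_value, done = 1.0, 0.0, True
            else:
                reward, next_value, done = 0.0, values[next_state], False
            # Nudge the estimate toward the bootstrapped target.
            values[state] += alpha * (reward + next_value - values[state])
            if done:
                break
            state = next_state
    return values


print([round(v, 2) for v in td0_random_walk()])
```

No labeled dataset is involved: the estimates come entirely from the agent’s own rollouts, and they approach the true success probabilities of 1/6 through 5/6 from left to right.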

He cited the DeepSeek-R1 paper as a prime example of this shift. Utilizing pure RL to incentivize reasoning capabilities without supervised fine-tuning, the model demonstrated that changes in training method can yield gains that raw scale cannot.

Google’s “Deep Think” engine in the Gemini 3 update similarly represents this move toward “test-time compute.” Engineers aim to create models that can “think” for longer to solve harder problems.

By allowing the model to explore multiple solution paths before responding, labs hope to break through the reasoning ceiling that has capped current LLM performance.
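
One simple form of this idea is best-of-N sampling: draw several candidate answers and keep the one a scorer likes best. The sketch below is a toy under loud assumptions; `propose` stands in for a model sampling an answer, `score` stands in for a verifier, and the task (guess a number whose square is 2) is purely hypothetical. It says nothing about how Deep Think works internally.

```python
import random


def propose(rng: random.Random) -> float:
    """Stand-in for sampling one candidate answer from a model."""
    return rng.uniform(0.0, 2.0)


def score(candidate: float) -> float:
    """Stand-in verifier: higher is better, 0 means candidate**2 == 2."""
    return -abs(candidate * candidate - 2.0)


def best_of_n(n: int, seed: int = 0) -> float:
    """Sample n candidates and return the one with the highest score."""
    rng = random.Random(seed)
    candidates = [propose(rng) for _ in range(n)]
    return max(candidates, key=score)


# More samples means more inference compute per query, in exchange for
# a better chance that at least one candidate is good.
for n in (1, 4, 16, 64):
    answer = best_of_n(n)
    print(f"n={n:>2}  answer={answer:.4f}  error={-score(answer):.4f}")
```

Because the same seed is reused, a larger `n` always includes the smaller run’s candidates, so the best score can only stay the same or improve as compute grows.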

Google’s Response: The 1,000x ‘Age of Inference’ Mandate

While AI scientists debate the best path forward, Google is already retooling its entire physical infrastructure for this new reality. An internal presentation from November 6 reveals a “wartime” mandate: double AI serving capacity every six months.

The long-term target is a staggering 1,000-fold increase in capacity by 2030.
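
The two figures are consistent with each other, as a quick back-of-the-envelope check shows. The sketch assumes the six-month doubling cadence holds steadily for about five years; the function names are illustrative.

```python
import math


def capacity_multiple(years: float, doubling_period_years: float = 0.5) -> float:
    """Capacity growth factor after `years` of steady doubling."""
    return 2 ** (years / doubling_period_years)


def years_to_reach(multiple: float, doubling_period_years: float = 0.5) -> float:
    """Years of steady doubling needed to reach a given growth factor."""
    return math.log2(multiple) * doubling_period_years


print(capacity_multiple(5))               # ten doublings in five years
print(round(years_to_reach(1000), 2))     # time needed for a 1,000x increase
```

Ten doublings give a factor of 1,024, so doubling every six months reaches 1,000x in just under five years.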

VP of Infrastructure Amin Vahdat frames this as an existential necessity, noting that infrastructure is “the most critical and also the most expensive part of the AI race.” Driving this expansion is not training (the old bottleneck) but the “Age of Inference.”

State-of-the-art reasoning models like GPT-5.1 and Gemini 3 Pro require far more compute at runtime to explore solution paths.

Unlike a standard search query, which costs fractions of a cent, a future “Deep Think” query might run for many minutes or even hours, consuming substantial inference compute.

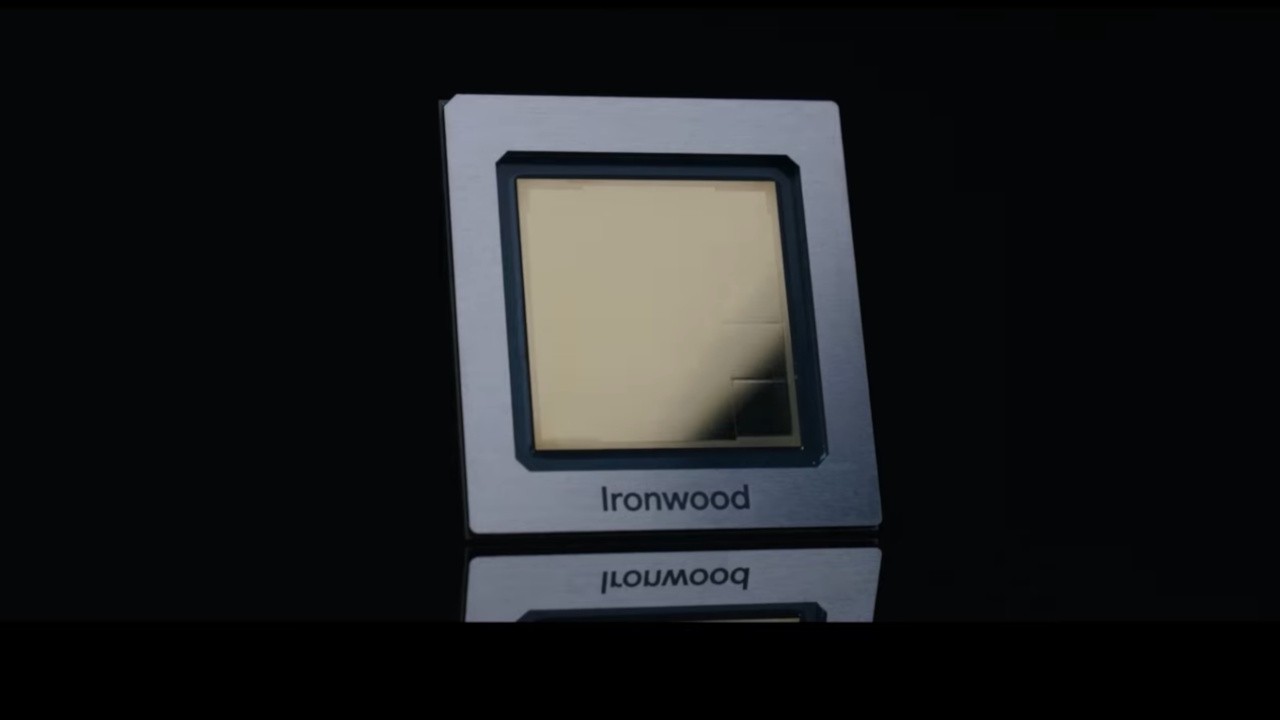

But scaling capacity by 1,000x using standard hardware is economically impossible; efficiency is the only survival mechanism. Therefore Google’s strategy relies on “co-design,” integrating software needs directly into custom silicon with its new “Ironwood” TPU.

Simultaneously, general-purpose workloads are being offloaded to Arm-based “Axion” CPUs to free up power budgets for the power-hungry TPUs.

However, the Jevons Paradox looms large. This economic principle holds that increasing efficiency tends to drive higher total consumption rather than conservation. As inference costs drop, demand for complex “Deep Think” reasoning will explode.

Consequently, total energy consumption and capital expenditure will likely rise, even as per-unit efficiency improves.
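
A small worked example makes the mechanism concrete. The sketch assumes a constant-elasticity demand curve, where query volume scales as price raised to the power of minus the elasticity. The 10x cost drop and the elasticity values are hypothetical inputs, not figures from Google.

```python
def jevons(cost_drop_factor: float, elasticity: float) -> tuple[float, float]:
    """Return (demand_multiple, total_spend_multiple) after a cost drop.

    Assumes demand is proportional to price ** (-elasticity).
    """
    demand_multiple = cost_drop_factor ** elasticity
    # Total spend = price * quantity, and price fell by cost_drop_factor.
    spend_multiple = demand_multiple / cost_drop_factor
    return demand_multiple, spend_multiple


for elasticity in (0.5, 1.0, 1.5):
    demand, spend = jevons(cost_drop_factor=10, elasticity=elasticity)
    print(f"elasticity={elasticity}: demand x{demand:.1f}, total spend x{spend:.2f}")
```

Total spend rises only when elasticity exceeds 1. With the assumed elasticity of 1.5, a 10x cheaper query leads to about 32x more queries and about 3.2x more total spend.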

OpenAI’s Cost of Being First

Shifting away from pure scaling has hit the incumbent, OpenAI, the hardest. Following the triumphalism of its 2025 DevDay, the company is undergoing a psychological reset, moving from a default winner mindset to a disciplined wartime footing.

This shift was crystallized in a leaked internal memo from CEO Sam Altman, who explicitly warned staff of “rough vibes” and significant “economic headwinds.”

The memo marks a rare moment of vulnerability for a leader known for relentless optimism, starkly contrasting with the company’s public projection of invincibility. Most alarming is the revised internal forecast regarding growth trajectories.

In a “bear case” scenario, revenue growth could plummet to pedestrian single digits, specifically 5-10%, by 2026. This would represent a catastrophic deceleration from the triple-digit expansion that characterized the ChatGPT boom.

Compounding the revenue anxiety is the company’s staggering burn rate. Projections indicate the company is grappling with a potential $74 billion operating loss by 2028. With profitability previously dismissed by leadership as a secondary concern, the sudden focus on fiscal discipline suggests that investor patience for indefinite losses may be waning as infrastructure costs mount.

The memo also contained a candid concession regarding the competitive landscape. Acknowledging that the technical gap has closed, Altman admitted to employees that the company is now in a position of “catching up fast.”

“Google has been doing excellent work recently in every aspect,” he wrote.

Operationally, this anxiety has manifested in immediate corrective measures. Rumors of a hiring freeze have begun circulating, adding weight to the warning of a more disciplined phase.

Simultaneously, engineering teams are rushing to deploy a new model codenamed “Shallotpeat.” Explicitly aimed at fixing bugs that emerged during the pre-training process, the model represents a critical attempt to stabilize the company’s technical foundation.

The Product War: ‘Delight’ vs. ‘Invincibility’

Google’s Gemini 3 update has successfully shifted the narrative from “catch-up” to “product delight.” Salesforce CEO Marc Benioff’s public defection from ChatGPT is a bellwether event: “I’m not going back. The leap is insane: reasoning, speed, images, video… everything is sharper and faster,” he recently stated.

However, a skeptical look reveals a fragmented battlefield, not a new monopoly. Anthropic’s Claude Opus 4.5 launch shows that the race is far from over. The model scores 80.9% on SWE-bench Verified, beating both Gemini 3 Pro and GPT-5.1.

This three-way deadlock between OpenAI, Google, and Anthropic suggests that no single lab has a permanent “moat.” Raw intelligence is no longer the sole differentiator; integration and utility now matter as well.

The Hardware Shock: Nvidia’s Defensive Crouch

And the costly vertical stacks implemented by these players represent an existential threat to Nvidia. Reports that Meta is negotiating to use Google TPUs sent Nvidia stock down in recent days, in spite of record earnings.

If hyperscalers like Meta and Google can rely on their own silicon for the “Age of Inference,” Nvidia might lose its biggest customers.

Breaking from its usual silence, Nvidia felt the need to issue a defensive post claiming to be “a generation ahead of the industry,” insisting that it has “the only platform that runs every AI model and does it everywhere computing is done.”

Such a public rebuttal suggests genuine anxiety about the commoditization of AI hardware.

Google’s ability to offer TPUs via Cloud poses a serious threat to Nvidia’s margins, challenging the assumption that its GPUs are the only viable path to state-of-the-art AI.

Market Reality: Bubble or Metamorphosis?

The current moment is defined by the juxtaposition of “AI bubble” fears and significant capex. Sundar Pichai has admitted to “elements of irrationality” in the market, while at the same time mandating 1,000x growth.

Rather than a contradiction, this represents a calculation: the “Age of Scaling” bubble is bursting, but the “Age of Inference” economy is just at its beginning.

The winners of the next phase will be those who can sustain the substantial R&D costs of the next “Age of Research” in AI.

Companies relying solely on off-the-shelf hardware and public data (the 2020 playbook) face extinction. The future will belong to those who can build the entire stack, from the chip to the operating system.